Data providers may be able to offer all the evidence needed to inform next steps straight “off the shelf,” so to speak. For decision makers that have the resources, these companies may be an ideal solution. It is also worth noting that there are data and analytics companies that aggregate and track data on clinical research programs, or consulting firms that will conduct a complete systematic review and produce recommendations about optimal next steps. If a primary goal of the evidence review is something like a gap analysis (ie, identifying important questions or clinical needs that have not yet been addressed by any existing studies) then these leaner approaches may be viable alternatives. Both of these approaches can be completed much more quickly than a full systematic review, sometimes taking only days or weeks rather than months. Or a scoping review may abandon an evidence assessment entirely, and instead focuses solely on providing insight about the size and breadth of the existing evidence base.

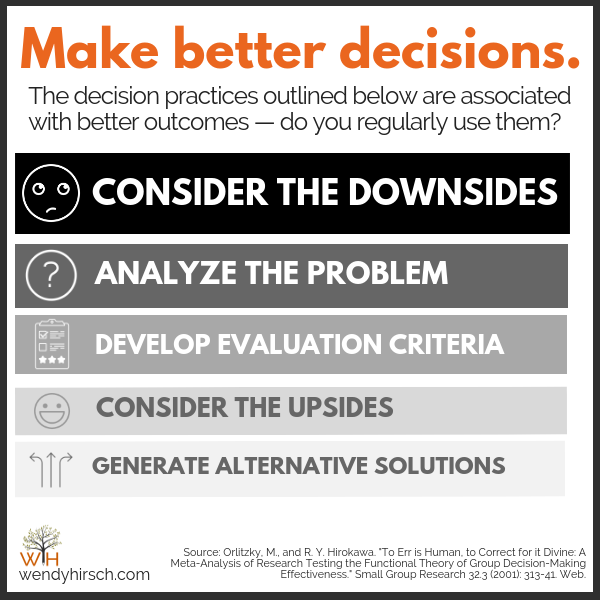

The rapid review, for example, will often use a similar search methodology to a full systematic review but will not go as deep into the data extraction or evidence-quality assessment. 4 Rapid reviews and scoping reviews are 2 methodologies that aim to strike a more time-sensitive balance between the thoroughness of an evidence assessment and gaining sufficient insight to act. The Silver Standards of Evidence Synthesisīut before describing our more radical solution, we should first acknowledge that there are a number of alternatives to the gold-standard systematic review. However, in what follows, we argue that this paradox can be resolved by rethinking some of the fundamental assumptions about the goals and products of systematic evidence reviews. But because time is precious and decisions about what studies to conduct and how to conduct them may not be able to wait a year, a comprehensive, up-to-date understanding is often out of reach. Thus, we arrive at one of the paradoxes of evidence-based decision making in clinical research and development: there is widespread agreement that better research will result from first having a comprehensive understanding of the existing evidence in hand. Or even if there is already a published systematic review to draw on, given the time it took the authors of that review to complete the work and get it through the peer-review and publication process, the underlying data are likely to be a year or more out-of-date. 3 As informative and valuable as this exercise might be, some research and development activities simply cannot be delayed a year or more. Unfortunately, conducting a gold-standard systematic review is a laborious and time-consuming process, often taking teams of experts more than 12 months to complete. 
1,2 Or if there is no such review, then they should first conduct a systematic review to establish (a) that their proposed line of research has not already been done by someone else, and (b) that their research design decisions are aligned with the best standards and practices. In much of the trial methodology literature, for example, it is suggested that these decision makers should refer to the latest, relevant systematic review.

Whether we are referring to clinical development teams in the pharmaceutical industry planning a translational research program, research funding organizations deciding which project applications to fund, or academic investigators planning and designing their next study, the more that each of these groups understand about what has already been done, what worked well (or did not) in the past, and where the highest value opportunities are, the better.īut while it is easy to say that these kinds of decisions should be based on a comprehensive understanding of the evidence, it is far harder to say exactly how this should be accomplished. Indeed, in the era of evidence-based medicine, this prescription should seem axiomatic. It is easy to say that decisions about planning and design for clinical research should be informed by a comprehensive understanding of the existing evidence. Spencer Phillips Hey, PhD, Harvard Medical School, Boston, MA, USA, and Chief Executive Officer, Prism Analytic Technologies, Cambridge, MA, USA Joël Kuiper, Chief Technology Officer, Prism Analytic Technologies, Cambridge, MA, USA Cliff Fleck, Head of Communications, Prism Analytic Technologies, Cambridge, MA, USA
